Netflix Software Engineer Phone Screen Questions

28+ questions from real Netflix Software Engineer Phone Screen rounds, reported by candidates who interviewed there.

What does the Netflix Phone Screen round test?

The Netflix phone screen typically lasts 45-60 minutes and evaluates core software engineering fundamentals. Candidates should expect 1-2 algorithmic problems, a basic system design discussion at senior levels, and questions about relevant experience. The goal is to confirm technical competence before bringing candidates onsite.

Netflix Software Engineer Phone Screen Questions

Online application -> HR contacted me -> Asked about Netflix's culture and my proudest project. First-round phone interview -> Implement a key-value store whose entries can expire -> Follow-up question: How
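
The expiring key-value question above can be sketched roughly as follows. This is a minimal illustration, not the interviewer's expected answer: the class name `ExpiringStore`, the per-key `ttl_seconds` parameter, and the injectable `clock` are all assumptions, and expired entries are evicted lazily on read.

```python
import time
from typing import Any, Callable, Optional


class ExpiringStore:
    """Hypothetical key-value store whose entries expire after a per-key TTL."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock  # injectable so tests can control time
        self._data: dict[str, tuple[Any, float]] = {}  # key -> (value, expires_at)

    def put(self, key: str, value: Any, ttl_seconds: float) -> None:
        # Overwriting a key also resets its expiry.
        self._data[key] = (value, self._clock() + ttl_seconds)

    def get(self, key: str) -> Optional[Any]:
        entry = self._data.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._data[key]  # lazy eviction on read
            return None
        return value
```

Lazy eviction keeps `get` and `put` at O(1) but lets dead entries linger until they are read; a background sweep or a min-heap keyed on expiry time would be the usual answer if memory is a concern.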

Netflix L4 Software Engineer Remote Interview Experience and Questions

**Round 1**

* **Recruiter Call (30 min)**
* **Technical Phone Screen (60 min):** The problem required implementing a rate limiter that allows at most X requests every Y seconds from a single client.
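
One common way to approach the rate limiter described above is a sliding-window log per client. The sketch below assumes that interpretation; the names `SlidingWindowRateLimiter`, `allow`, and the injectable `clock` are hypothetical and not taken from the original report.

```python
import time
from collections import defaultdict, deque
from typing import Callable


class SlidingWindowRateLimiter:
    """Allows at most max_requests per window_seconds for each client."""

    def __init__(self, max_requests: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._max = max_requests
        self._window = window_seconds
        self._clock = clock  # injectable so tests can control time
        self._hits: dict[str, deque[float]] = defaultdict(deque)

    def allow(self, client_id: str) -> bool:
        now = self._clock()
        hits = self._hits[client_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self._window:
            hits.popleft()
        if len(hits) < self._max:
            hits.append(now)
            return True
        return False
```

The log uses O(X) memory per client. A fixed-window counter or token bucket trades some accuracy at window boundaries for O(1) memory.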

Netflix | Phone screen

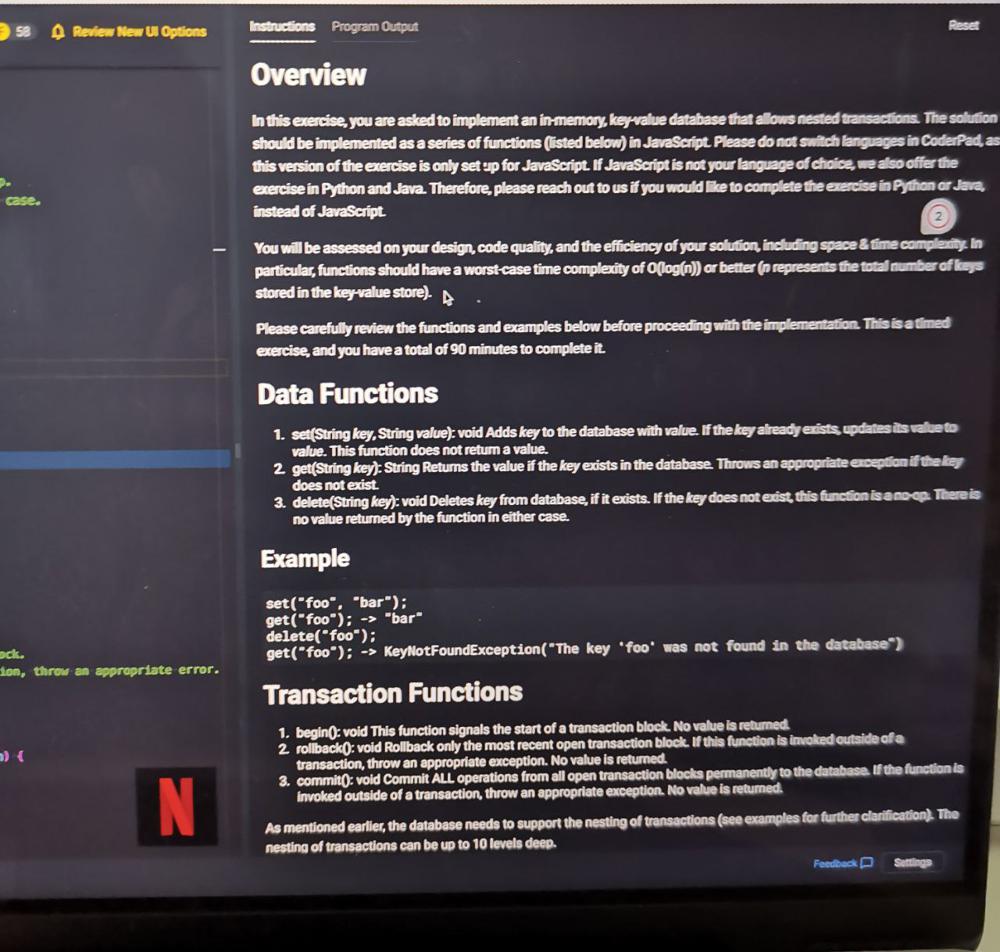

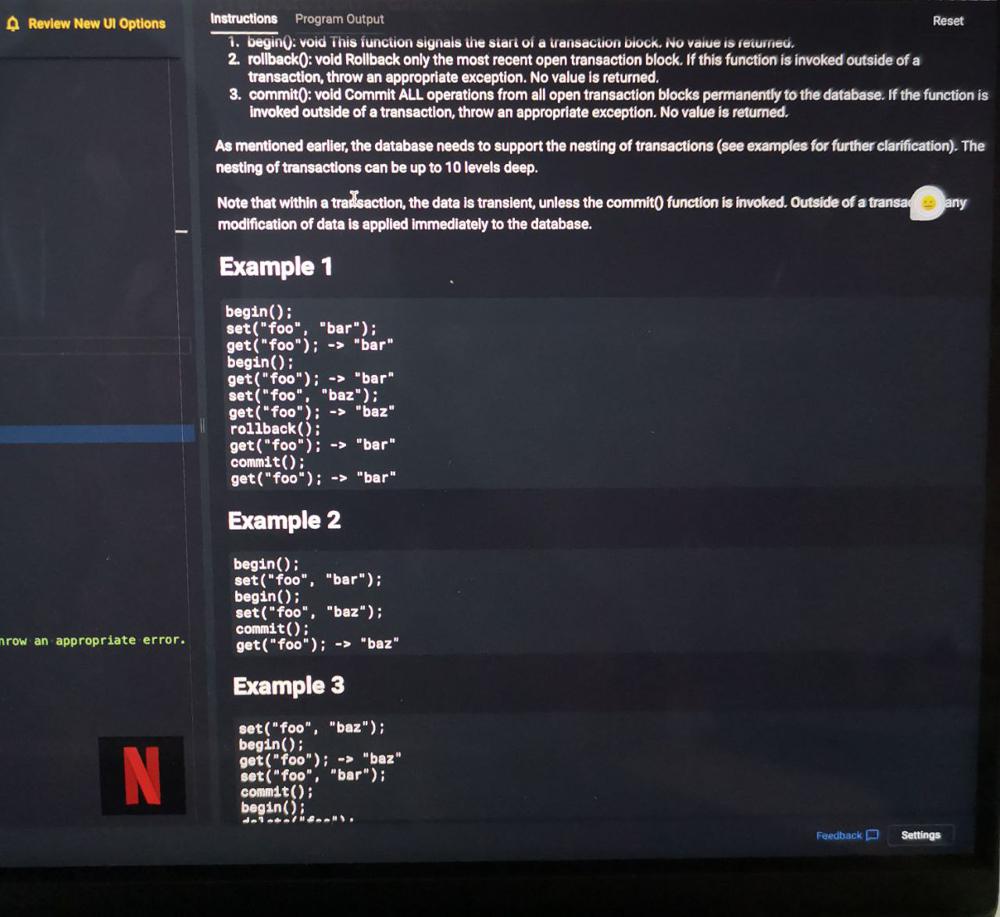

I recently had a technical phone interview with Netflix. It was basically the same question as https://leetcode.com/discuss/interview-question/279913/bloomberg-onsite-key-value-store-with-transactions. The interviewer wanted me to solve it in JS. I came up with the map...
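
For readers unfamiliar with this style of question, here is a rough sketch of a key-value store with nested transactions. The exact operations in the linked problem are not reproduced here, so the method names (`begin`, `commit`, `rollback`, `set`, `get`, `delete`) and the nested-transaction semantics are assumptions. It is written in Python rather than JS to match the other code on this page.

```python
from typing import Optional

_DELETED = object()  # sentinel marking a key deleted inside a transaction


class TransactionalStore:
    def __init__(self) -> None:
        self._committed: dict[str, str] = {}
        self._layers: list[dict[str, object]] = []  # one overlay per open transaction

    def begin(self) -> None:
        self._layers.append({})

    def set(self, key: str, value: str) -> None:
        target = self._layers[-1] if self._layers else self._committed
        target[key] = value

    def delete(self, key: str) -> None:
        if self._layers:
            self._layers[-1][key] = _DELETED
        else:
            self._committed.pop(key, None)

    def get(self, key: str) -> Optional[str]:
        # The newest open transaction wins; fall through to committed data.
        for layer in reversed(self._layers):
            if key in layer:
                value = layer[key]
                return None if value is _DELETED else value
        return self._committed.get(key)

    def rollback(self) -> bool:
        if not self._layers:
            return False
        self._layers.pop()
        return True

    def commit(self) -> bool:
        if not self._layers:
            return False
        top = self._layers.pop()
        if self._layers:
            self._layers[-1].update(top)  # merge into the parent transaction
        else:
            for key, value in top.items():
                if value is _DELETED:
                    self._committed.pop(key, None)
                else:
                    self._committed[key] = value
        return True
```

Each `begin` pushes an empty overlay, so `rollback` is O(1) and `commit` costs only the number of keys touched in that transaction.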

Hubspot - sse2

Round 1: Q: Given a string s = "aabbaaa" and k = 2, find the substring of length k that occurs most often in s. Answer: "aa". Round...
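
A minimal sketch of the substring-counting question above, assuming overlapping occurrences count and that ties go to the substring seen first (the post does not say). The function name `most_frequent_substring` is hypothetical.

```python
from collections import Counter


def most_frequent_substring(s: str, k: int) -> str:
    """Return the length-k substring that occurs most often in s."""
    if k <= 0 or k > len(s):
        return ""
    # Slide a window of width k over s and count every substring.
    counts = Counter(s[i:i + k] for i in range(len(s) - k + 1))
    # max() returns the first maximal key, and Counter keeps insertion order,
    # so ties are broken by earliest first occurrence.
    return max(counts, key=counts.__getitem__)


print(most_frequent_substring("aabbaaa", 2))  # aa
```

This runs in O(n * k) time because each slice costs O(k); a rolling hash would bring that closer to O(n) for large k.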

Hubspot | Senior Software Engineer | Berlin | Reject

Round 1 - Algorithm round Provided an API key to fetch the data(json) from a url. This data contains the list of partners and their countries and on which dates they...

Geico | Remote | SDE II | Virtual Onsite Interview

Company: Geico Position: SDE II Salary: $155,000 RSU: None offered Sign On: $15,000 Location: Fully Remote US Status: Offer Virtual Onsite Rounds: Coding Round 1 (30 mins): Similar to: Transform number with duplicate digits into the next highest...

EPAM | Senior Software Developer | Hyderabad | Dec 2023 [Passed]

Status: IN3, Walmart Global Tech, Bengaluru - 6yrs overall experience Position: Senior Software Developer at EPAM Location: Hyderabad, India Date: Dec, 2023 Round 1: Introduction: Gave a brief introduction about my experiences. Java-Related Questions: JVM, Heap memory,...

[PS] [Interview #5] Parspec Interview experience

Interviewing for Role Software Architect | Bengaluru | November 2023 Current Stats Status: Lead Engineer at B2B based startup Location: Bengaluru Interview process: Round-1: Exploratory Round a. Phone Interview call with CTO b. Discussion...

Phonepe|SDE-Tools Internship (Oncampus)| June 2022

Hello! this was my experience being interviewed by Phonepe for their SDE-Tools for network infrastructure role . Status: Fresher (Graduating in 2023) | Tier 2 College in India Experience: 6 month internship...

SAP Labs | SSE | Bangalore

Round 1: - Write an efficient function that takes stockPrices and returns the best profit I could have made from one purchase and one sale of one share of SAP stock...
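
The stock question above is usually solved with a single pass that tracks the lowest price seen so far. The sketch below assumes the purchase must come strictly before the sale and that at least two prices are given, so the result can be negative if prices only fall. The name `best_profit` is hypothetical.

```python
def best_profit(stock_prices: list[int]) -> int:
    """Best profit from one purchase followed by one later sale."""
    if len(stock_prices) < 2:
        raise ValueError("need at least two prices")
    min_price = stock_prices[0]
    best = stock_prices[1] - stock_prices[0]
    for price in stock_prices[1:]:
        # Selling at the current price against the cheapest earlier purchase.
        best = max(best, price - min_price)
        min_price = min(min_price, price)
    return best


print(best_profit([10, 7, 5, 8, 11, 9]))  # 6
```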

Wayfair Software Engineer Interview

Status: Software Developer with 2 years of full time Experience School: The University of Akron Wayfair Software Engineer Interview I recently went through a Wayfair interview and wanted to contribute the questions...

## Problem

Given a set of numbers and arithmetic operations, determine if a target value can be reached. Likely involves expression generation or backtracking.

## Likely LeetCode equivalent

No direct match.

## Tags

math, backtracking, coding
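
The problem statement above is loose, so the following backtracking sketch fixes some details as assumptions: every number must be used exactly once, the allowed operations are `+`, `-`, `*`, and `/`, and any ordering or grouping is permitted. The function name `can_reach_target` is hypothetical. Exact fractions are used to avoid floating-point error in division.

```python
from fractions import Fraction
from typing import Iterable


def can_reach_target(numbers: Iterable[int], target: int) -> bool:
    def solve(values: list[Fraction]) -> bool:
        if len(values) == 1:
            return values[0] == target
        # Pick an ordered pair, combine it, and recurse on the smaller list.
        for i in range(len(values)):
            for j in range(len(values)):
                if i == j:
                    continue
                a, b = values[i], values[j]
                rest = [v for idx, v in enumerate(values) if idx not in (i, j)]
                candidates = [a + b, a - b, a * b]
                if b != 0:
                    candidates.append(a / b)
                if any(solve(rest + [c]) for c in candidates):
                    return True
        return False

    return solve([Fraction(n) for n in numbers])
```

The search is exponential in the number of inputs, which is acceptable for the handful of numbers typical of this kind of question.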

## Problem

Implement a command history system (like a shell undo/redo or browser history) supporting navigation through previous commands. Likely uses two stacks or a deque.

## Likely LeetCode equivalent

No direct match.

## Tags

stack, design, coding
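
A minimal two-stack sketch of the command history idea above. The interface (`execute`, `undo`, `redo`) is an assumption, since the problem statement does not specify one.

```python
from typing import Optional


class CommandHistory:
    def __init__(self) -> None:
        self._done: list[str] = []    # commands that can be undone
        self._undone: list[str] = []  # commands that can be redone

    def execute(self, command: str) -> None:
        self._done.append(command)
        self._undone.clear()  # a new command invalidates the redo stack

    def undo(self) -> Optional[str]:
        if not self._done:
            return None
        command = self._done.pop()
        self._undone.append(command)
        return command

    def redo(self) -> Optional[str]:
        if not self._undone:
            return None
        command = self._undone.pop()
        self._done.append(command)
        return command
```

Clearing the redo stack on `execute` mirrors how browsers drop forward history when you navigate somewhere new.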

Content Management System: Design a CMS with Versioned Articles, Tags, and Publishing Workflow

## Round 1 - Coding

## Problem

Design a lightweight CMS class that manages articles through a publishing workflow:

- `create_article(title, body, author_id, tags)` -> `article_id`
- `edit_article(article_id, body)`: save as a new version; preserve all previous versions.
- `publish_article(article_id)`: transition from `DRAFT` to `PUBLISHED`.
- `get_article(article_id, version=None)`: return article at given version, or latest if None.
- `search_by_tag(tag)`: return all published articles with that tag.

```python
class CMS:
    def create_article(self, title: str, body: str, author_id: str, tags: list[str]) -> str:
        ...

    def edit_article(self, article_id: str, new_body: str) -> int:
        # returns new version number
        ...

    def publish_article(self, article_id: str) -> None:
        ...

    def get_article(self, article_id: str, version: int | None = None) -> dict:
        ...

    def search_by_tag(self, tag: str) -> list[dict]:
        ...
```

```
id = cms.create_article("Intro", "v1 body", "u1", ["python"])
cms.edit_article(id, "v2 body")          -> version 2
cms.publish_article(id)
cms.get_article(id, version=1)["body"]   -> "v1 body"
cms.search_by_tag("python")              -> [latest published article]
```

## Follow-ups

1. How do you store versions efficiently? (Full snapshots vs diff/delta)
2. What prevents a `PUBLISHED` article from being edited back to `DRAFT`? Where does that state machine live?
3. `search_by_tag` must also support multi-tag AND queries. How do you implement tag intersection?
4. How would you implement a scheduled publish (article goes live at a future timestamp)?

## Problem

Given a list of strings, count the number of pairs `(i, j)` where `i < j` and the two strings share no common characters.

```python
def count_disjoint_pairs(words: list[str]) -> int:
    ...
```

```
Input: words = ["abc", "de", "fg", "abcd"]
Pairs:
  ("abc","de"):   no overlap -> count
  ("abc","fg"):   no overlap -> count
  ("abc","abcd"): overlap 'a','b','c' -> skip
  ("de","fg"):    no overlap -> count
  ("de","abcd"):  overlap 'd' -> skip
  ("fg","abcd"):  no overlap -> count
Output: 4
```

## Follow-ups

1. Represent each word as a bitmask of 26 bits (one per letter). How does this make the disjoint check O(1)?
2. With the bitmask approach, what is the overall time complexity? How does it compare to using a set intersection?
3. Can two different words share the same bitmask? Give an example. Does this cause any issue for your algorithm?
4. Extend: count pairs where the two strings share exactly one common character. How does your bitmask approach need to change?
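
The bitmask idea from the first follow-up can be sketched as below, assuming every word contains only lowercase `a`-`z` (the problem does not state the alphabet). Two words are disjoint exactly when the bitwise AND of their masks is zero.

```python
def count_disjoint_pairs(words: list[str]) -> int:
    # Build one 26-bit mask per word: bit i is set if chr(ord('a') + i) appears.
    masks = []
    for word in words:
        mask = 0
        for ch in word:
            mask |= 1 << (ord(ch) - ord("a"))
        masks.append(mask)

    # Compare every pair (i, j) with i < j; a zero AND means no shared letters.
    total = 0
    for i in range(len(masks)):
        for j in range(i + 1, len(masks)):
            if (masks[i] & masks[j]) == 0:
                total += 1
    return total
```

Building the masks costs O(total characters) and the pairwise check is O(n^2) with a constant-time comparison. Words such as "ab" and "ba" share a mask, which is harmless here because only the set of letters matters.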

## Problem

You have a list of content titles (article headlines, video titles, etc.). Some are exact duplicates; some are near-duplicates (minor wording differences). Implement:

1. `find_exact_duplicates(titles)`: return groups of titles that are exactly the same (case-insensitive).
2. `find_near_duplicates(titles, threshold)`: return groups of titles whose normalized edit distance is <= `threshold`. Normalize: lowercase, strip punctuation, collapse whitespace.

```python
def find_exact_duplicates(titles: list[str]) -> list[list[str]]:
    ...

def find_near_duplicates(titles: list[str], threshold: float) -> list[list[str]]:
    ...
```

```
titles = [
    "Python Tips for Beginners",
    "python tips for beginners",    # exact dup (case)
    "Python Tips For Beginners!",   # near dup (punct)
    "Java Tips for Beginners"       # different
]
find_exact_duplicates(titles)
-> [["Python Tips for Beginners", "python tips for beginners"]]
find_near_duplicates(titles, 0.1)
-> [["Python Tips for Beginners", "python tips for beginners", "Python Tips For Beginners!"]]
```

## Follow-ups

1. `find_near_duplicates` is O(n^2) with pairwise comparisons. For 100,000 titles, how do you scale this using MinHash or locality-sensitive hashing?
2. What normalization steps matter most for your use case (news titles vs. product names vs. code repo names)?
3. How do you choose the right `threshold`? Describe a labeling + precision/recall evaluation approach.
4. Titles keep arriving as a stream. How do you maintain near-duplicate groups incrementally without reprocessing the full corpus?
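
A brute-force sketch of both functions above. Two choices here are assumptions, since the problem leaves them open: "normalized edit distance" is taken to be Levenshtein distance divided by the longer normalized length, and near-duplicate groups are formed transitively with union-find. The helpers `normalize` and `edit_distance` are hypothetical names.

```python
import re
from collections import defaultdict


def normalize(title: str) -> str:
    # Lowercase, strip punctuation, collapse whitespace.
    stripped = re.sub(r"[^\w\s]", "", title.lower())
    return " ".join(stripped.split())


def edit_distance(a: str, b: str) -> int:
    # Classic Levenshtein distance with a rolling row.
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        curr = [i]
        for j, cb in enumerate(b, 1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = curr
    return prev[-1]


def find_exact_duplicates(titles: list[str]) -> list[list[str]]:
    groups: dict[str, list[str]] = defaultdict(list)
    for title in titles:
        groups[title.lower()].append(title)
    return [group for group in groups.values() if len(group) > 1]


def find_near_duplicates(titles: list[str], threshold: float) -> list[list[str]]:
    norm = [normalize(t) for t in titles]
    parent = list(range(len(titles)))

    def find(x: int) -> int:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for i in range(len(titles)):
        for j in range(i + 1, len(titles)):
            longest = max(len(norm[i]), len(norm[j])) or 1
            if edit_distance(norm[i], norm[j]) / longest <= threshold:
                parent[find(j)] = find(i)  # merge the two groups

    groups: dict[int, list[str]] = defaultdict(list)
    for i, title in enumerate(titles):
        groups[find(i)].append(title)
    return [group for group in groups.values() if len(group) > 1]
```

Transitive grouping means A and C can land in the same group through B even if A and C are further apart than `threshold`; whether that is acceptable is worth raising with the interviewer.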

## Problem

You are given a list of viewing events for a single user. Each event has a `show_id` and a `timestamp`. A "duplicate" viewing occurs when the same `show_id` appears more than once within a 24-hour window of its first occurrence. Return a deduplicated list preserving only the first occurrence of each show within any 24-hour window.

```python
from typing import List, Tuple

def deduplicate_viewings(events: List[Tuple[str, int]]) -> List[Tuple[str, int]]:
    # events: list of (show_id, unix_timestamp_seconds)
    # return: filtered list in original order
    pass
```

**Example:**

```
Input:  [("S1", 1000), ("S2", 1500), ("S1", 86200), ("S1", 87500)]
Output: [("S1", 1000), ("S2", 1500), ("S1", 87500)]
# S1 at 86200 is within 86400s of S1 at 1000, so dropped.
# S1 at 87500 is more than 86400s after S1 at 1000, so kept (new window starts).
```

## Follow-ups

1. What if events can arrive out of order by timestamp? How does your approach change?
2. How would you scale this to process millions of users' events per day in a streaming pipeline?
3. What if "duplicate" is defined per (show_id, season_number) pair instead of show_id alone?
4. How would you emit a count of dropped duplicates per show alongside the filtered list?
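
A single-pass sketch of the deduplication described above. It assumes the events are already sorted by timestamp and treats an event exactly 86400 seconds after the window start as outside the window; the problem statement does not settle either point.

```python
from typing import Dict, List, Tuple

WINDOW_SECONDS = 24 * 60 * 60  # 86400


def deduplicate_viewings(events: List[Tuple[str, int]]) -> List[Tuple[str, int]]:
    window_start: Dict[str, int] = {}  # show_id -> timestamp of the kept event
    kept: List[Tuple[str, int]] = []
    for show_id, timestamp in events:
        start = window_start.get(show_id)
        if start is not None and timestamp - start < WINDOW_SECONDS:
            continue  # still inside the current window: duplicate
        # First sighting, or the previous window has closed: start a new one.
        window_start[show_id] = timestamp
        kept.append((show_id, timestamp))
    return kept
```

Dropped events do not extend the window, so a show watched every 23 hours is kept every other time rather than suppressed forever.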

Netflix SWE Phone - Event Logger

## Problem

Implement an event logging system that records timestamped events and supports queries such as range lookups or count within a time window.

## Likely LeetCode equivalent

No direct match.

## Tags

coding, design, hash_table
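
Since the problem statement above is only a summary, the following is a guess at a reasonable interface: `record`, `query_range`, and `count_in_window` are hypothetical names, and both range bounds are treated as inclusive. Timestamps are kept sorted so queries can use binary search.

```python
import bisect


class EventLogger:
    def __init__(self) -> None:
        self._timestamps: list[int] = []  # kept sorted
        self._names: list[str] = []       # parallel to _timestamps

    def record(self, timestamp: int, name: str) -> None:
        # Insert in sorted position so out-of-order arrivals are tolerated.
        idx = bisect.bisect_right(self._timestamps, timestamp)
        self._timestamps.insert(idx, timestamp)
        self._names.insert(idx, name)

    def _bounds(self, start: int, end: int) -> tuple[int, int]:
        lo = bisect.bisect_left(self._timestamps, start)
        hi = bisect.bisect_right(self._timestamps, end)
        return lo, hi

    def query_range(self, start: int, end: int) -> list[tuple[int, str]]:
        lo, hi = self._bounds(start, end)
        return list(zip(self._timestamps[lo:hi], self._names[lo:hi]))

    def count_in_window(self, start: int, end: int) -> int:
        lo, hi = self._bounds(start, end)
        return hi - lo
```

Counting is O(log n). Inserting into the middle of a list is O(n), which is fine when events mostly arrive in order and append at the end.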

Netflix SWE Phone - Function Timer

## Problem

Implement a wrapper or decorator that measures execution time of functions, possibly with aggregation or reporting of stats.

## Likely LeetCode equivalent

No direct match.

## Tags

coding, design, oop
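
A small decorator-based sketch of the function timer described above. The class name `FunctionTimer`, its `timed` decorator, and the shape of the `stats` result are assumptions made for illustration.

```python
import functools
import time
from collections import defaultdict
from typing import Any, Callable


class FunctionTimer:
    def __init__(self, clock: Callable[[], float] = time.perf_counter) -> None:
        self._clock = clock  # injectable so tests can control time
        self._durations: dict[str, list[float]] = defaultdict(list)

    def timed(self, func: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(func)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            start = self._clock()
            try:
                return func(*args, **kwargs)
            finally:
                # Record the duration even if the function raises.
                self._durations[func.__name__].append(self._clock() - start)
        return wrapper

    def stats(self, name: str) -> dict[str, float]:
        samples = self._durations.get(name, [])
        if not samples:
            return {"calls": 0, "total": 0.0, "mean": 0.0}
        total = sum(samples)
        return {"calls": len(samples), "total": total, "mean": total / len(samples)}
```

Usage is `timer = FunctionTimer()`, then `@timer.timed` above any function, then `timer.stats("function_name")` to read the aggregate.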

## Problem

You are building the homepage feed for a streaming platform. Given a list of content items and a user's watch history, return the top `k` items to display.

Ranking rules (in priority order):

1. Items the user has NOT watched rank above watched items.
2. Among unwatched, sort by `popularity_score` descending.
3. Among watched, sort by `last_watched_timestamp` descending (most recently watched first).

```python
from typing import List, Dict

def get_homepage(items: List[Dict], watch_history: List[str], k: int) -> List[Dict]:
    # items: [{"id": str, "title": str, "popularity_score": float}]
    # watch_history: list of watched item ids
    # return: top k items as list of dicts
    pass
```

**Example:**

```
items = [
    {"id": "A", "title": "Alpha", "popularity_score": 9.1},
    {"id": "B", "title": "Beta", "popularity_score": 8.5},
    {"id": "C", "title": "Gamma", "popularity_score": 7.0},
]
watch_history = ["B"]
k = 2
Output: [{"id": "A", ...}, {"id": "C", ...}]
# A and C are unwatched; B is excluded from top-2 slots.
```

## Follow-ups

1. How would you incorporate a "freshness" decay so older content loses score over time?
2. What data structure best supports real-time score updates as popularity changes?
3. How would you A/B test two ranking algorithms while keeping the experiment statistically valid?
4. If the user has no watch history, how do you cold-start the ranking?
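
The ranking rules above map naturally onto a composite sort key. One gap in the statement is that `watch_history` carries no timestamps, so this sketch assumes the list is ordered oldest to newest and uses list position as a stand-in for `last_watched_timestamp`.

```python
import heapq
from typing import Dict, List, Tuple


def get_homepage(items: List[Dict], watch_history: List[str], k: int) -> List[Dict]:
    # Assumption: watch_history is ordered oldest -> newest, so a larger
    # index means "watched more recently". Repeats keep the latest index.
    last_watched = {item_id: pos for pos, item_id in enumerate(watch_history)}

    def sort_key(item: Dict) -> Tuple[int, float]:
        pos = last_watched.get(item["id"])
        if pos is None:
            # Unwatched: group 0, higher popularity first.
            return (0, -item["popularity_score"])
        # Watched: group 1, most recently watched first.
        return (1, -pos)

    # nsmallest returns the k lowest keys in sorted order, in O(n log k).
    return heapq.nsmallest(k, items, key=sort_key)
```

If real timestamps are available, replacing `-pos` with the negated timestamp keeps the rest of the function unchanged.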
